Loome Overview

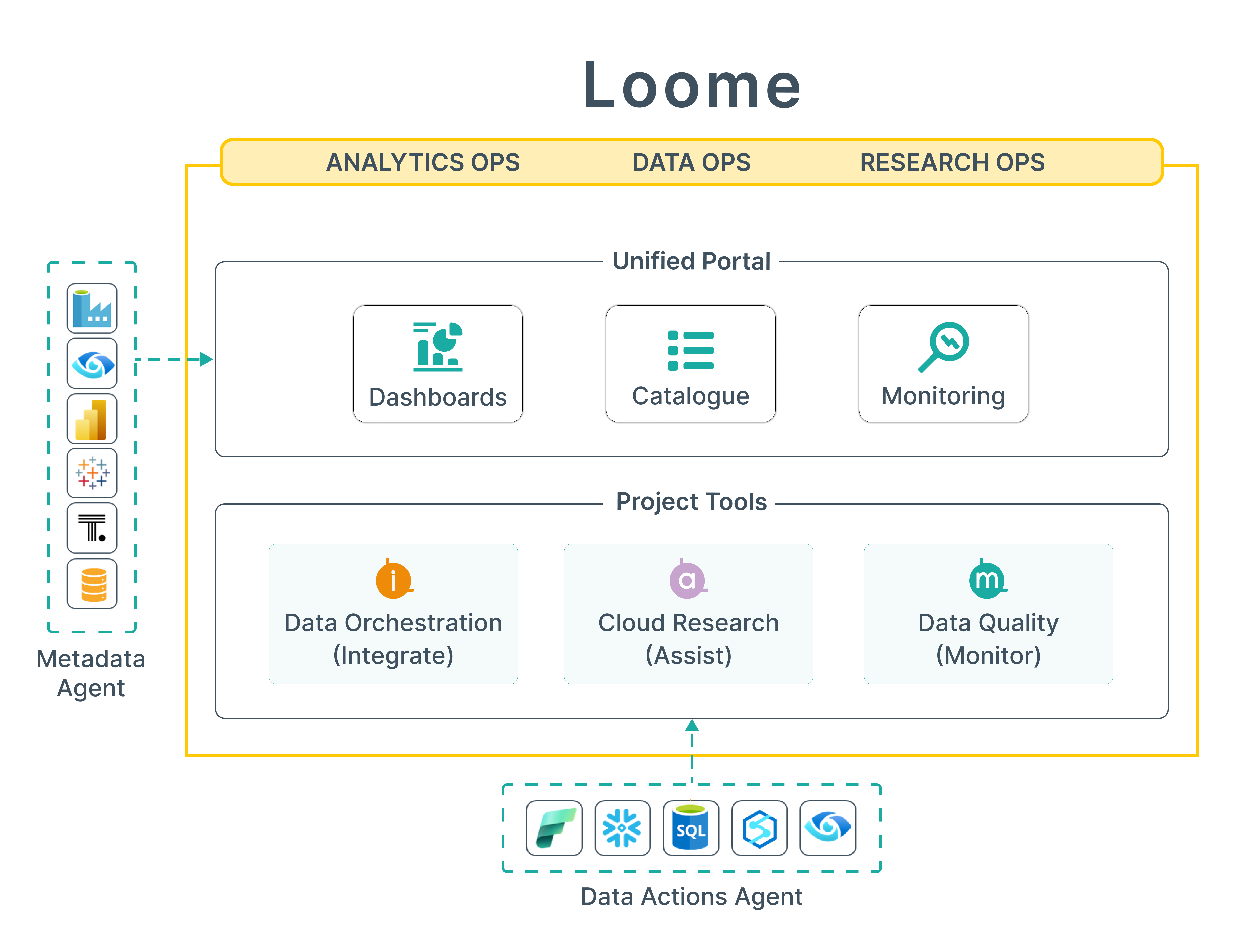

Loome is a Unified Analytics Ops Platform with a suite of capabilities spanning Analytics Ops, Data Ops, and Research Ops.

Loome for Analytics Ops

Manage the lifecycle of report publishing, including provision of a data glossary and a business-friendly portal.

Analytics Ops streamlines the management and delivery of analytics assets through centralized governance and discovery. Loome provides the platform that transforms fragmented analytics resources into an organized, accessible ecosystem.

Loome for Data Ops

Manage the reliability of data pipelines, including proactive data quality alerts and performance monitoring across multiple teams.

Data Ops combines proven engineering principles, automation, and structured workflows. Loome provides the platform that makes this possible, turning good data practices into reliable processes.

Loome for Research Ops

Allow staff to manage their own cloud resources, set budgets, and collaborate on projects, within a governed framework of endorsed configurations.

Research Ops optimizes research workflows and infrastructure through standardized processes and governed self-service. Loome provides the platform that transforms research infrastructure into frictionless, governed environments.

The Loome Toolkit

Loome provides key operational tools to help teams collaborate on creating new assets. You can find these on the Apps menu.

Loome Portal

The Unified Portal provides a single point of access for all staff in the organization, ranging from roles that are actively involved in building and supporting new data assets to business staff who search for and consume visualizations such as dashboards and reports.

The Catalogue is a list of all “assets” that exist in the organization. An asset may be a visualization, data pipeline, data quality rule or cloud resource. The asset catalogue is populated using the built-in “metadata agent”, which connects to your visualization tools and ETL tools. To configure a new source for the catalogue, navigate to the Sources menu. The catalogue can be extended with your own custom attributes and tags on the Metadata menu.

Dashboards let you create landing pages that mash up different types of content, including text, images and HTML, analytics visualizations, and summary metrics of the catalogue. Dashboards can be targeted at specific audiences, so you can highlight relevant content to different teams.

The monitoring capability of Activities allows you to keep track of time series information relating to assets in the catalogue:

- For Data Pipelines, the execution time of jobs and tasks
- For Cloud Resources, the compute time and cost
- For Data Quality Rules, the number of outstanding items or anomalies to resolve
- For Sources, the status of metadata synchronization jobs

Loome Integrate

Integrate helps you to quickly and easily copy data from data platforms such as SQL Server, Snowflake, Microsoft Fabric, Azure Storage and Google BigQuery. It can also execute processing across multiple ETL tools such as Azure Data Factory, Databricks and SSIS, as well as other operations such as Azure Batch, Stored Procedures, PowerShell and Azure Functions.

Loome Assist

Assist helps you to provision compute and storage resources, including High-Powered Workstations and HPC clusters that can be easily turned on and off as needed, with clear transparency of costs against budget.

Loome Monitor

Monitor allows you to create data quality rules that scan for exceptions and send alerts to data stewards or to the staff who are responsible for capturing data. Reference Data rules can be created to help you move from disparate spreadsheets to central online capture of the additional attributes and configuration data required for your analytics data model.
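To make the idea of a data quality rule concrete, here is a minimal conceptual sketch in Python. It is not Loome's API: the `QualityRule` class, the `scan` and `build_alert` functions, the sample rows and the email address are all hypothetical, chosen only to illustrate a rule that scans records for exceptions and prepares an alert for a data steward.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Optional


@dataclass
class QualityRule:
    """Hypothetical rule: a name, a steward to notify, and a check that flags bad rows."""
    name: str
    steward: str
    is_exception: Callable[[Dict], bool]


def scan(rule: QualityRule, rows: Iterable[Dict]) -> List[Dict]:
    """Return every row that the rule flags as an exception."""
    return [row for row in rows if rule.is_exception(row)]


def build_alert(rule: QualityRule, exceptions: List[Dict]) -> Optional[str]:
    """Compose an alert message for the steward, or None when there is nothing to report."""
    if not exceptions:
        return None
    return f"To {rule.steward}: rule '{rule.name}' found {len(exceptions)} exception(s)"


# Illustrative data only: two of the three customers have no email captured.
customers = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 3, "email": None},
]

missing_email = QualityRule(
    name="Customer email is missing",
    steward="data.steward@example.com",
    is_exception=lambda row: not row.get("email"),
)

exceptions = scan(missing_email, customers)
alert = build_alert(missing_email, exceptions)
print(alert)
```

In this sketch the rule definition is kept separate from the scan and the alert, so the same rule can be re-run on a schedule and the count of outstanding exceptions tracked over time.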

Get Started

You can sign up for Loome at https://loomesoftware.com/start-loome-trial.html.

To get started with these key operational tools, you’ll then need to set up a Data Actions Agent, which is installed in your environment and connects to your data sources and cloud platform.